How rConfig Uses AI Safely: Practical GenAI & MCP Without Exposing Your Data

The Security Dilemma of AI-Powered Network Automation

Every network engineer knows the feeling. You are staring at thousands of lines of configuration code spread across a multi-vendor environment, where a single misplaced character could trigger an outage. The pressure to maintain uptime is immense, and the complexity only grows. Generative AI promises to ease this burden by streamlining diagnostics, automating compliance checks, and even suggesting remediation scripts. Yet, this promise comes with a significant catch: to be useful, the AI needs access to your network’s most sensitive data.

For any IT security manager, this presents a fundamental conflict. Sending raw configuration files containing credentials, IP schemes, and security policies to an external AI model is a non-starter: it opens the door to data exfiltration, unauthorized changes, and serious breaches. The question is not whether to use AI, but how to implement it with a conservative, security-first mindset. Secure AI-assisted network management requires a framework built on trust and control.

This guide outlines a practical path forward. It is built on three core principles that allow you to harness AI’s analytical power without compromising your security posture: a strict human-in-the-loop framework, absolute data sovereignty through self-hosting, and controlled data exposure using an abstraction layer.

Establishing a Human-in-the-Loop Framework

The first and most critical safeguard is a non-negotiable human-in-the-loop (HITL) design. In this model, the AI functions as an analytical co-pilot, not an autonomous pilot. It can suggest, report, and analyze, but it is never permitted to execute changes on its own. The human operator remains the final point of authority and accountability, a principle central to any responsible human-in-the-loop AI system. This approach ensures that technology enhances human capability without usurping control.

A practical workflow looks like this:

- Query: An engineer poses a specific question to the system. For example, “Report all devices not compliant with our CIS password complexity baseline.”

- Analysis: The AI scans the relevant configurations internally. It then generates a detailed report or a suggested remediation script to bring the non-compliant devices into alignment.

- Review and Execution: The engineer carefully reviews the AI's output, validating its accuracy and intent. Only after explicitly approving it does the engineer use the platform’s separate deployment tools to push the change to the network.

This deliberate separation of suggestion from execution is fundamental. For instance, the AI Copilot within rConfig’s GenAI NCM engine is designed to generate these exact kinds of suggestions. However, an operator must then take that output and use a distinct bulk deployment module to implement any changes. This design choice guarantees complete human oversight. The AI provides the insight, but the engineer makes the decision. For more details on how this AI-powered approach works, you can explore the concepts behind our AI engine, which is built around this core philosophy.
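The separation of suggestion from execution can be sketched in a few lines of Python. This is an illustrative model only, not rConfig's implementation: the `Suggestion`, `review`, and `deploy` names are hypothetical, and `deploy` merely stands in for a separate deployment tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    """An AI-generated proposal (hypothetical type). It has no way to execute itself."""
    device_id: str
    script: str
    status: Status = Status.PENDING
    reviewed_by: Optional[str] = None


def review(suggestion: Suggestion, engineer: str, approve: bool) -> Suggestion:
    """Record the human decision; this is the only step that changes the status."""
    suggestion.status = Status.APPROVED if approve else Status.REJECTED
    suggestion.reviewed_by = engineer
    return suggestion


def deploy(suggestion: Suggestion) -> str:
    """Stand-in for a separate deployment tool: refuses anything a person has not approved."""
    if suggestion.status is not Status.APPROVED or suggestion.reviewed_by is None:
        raise PermissionError("suggestion has not been approved by a human operator")
    return f"deployed to {suggestion.device_id} (approved by {suggestion.reviewed_by})"
```

Calling `deploy` on a freshly generated suggestion raises `PermissionError`; it succeeds only after `review` has recorded an engineer's approval. The point of the design is that the approval gate lives in the deployment path, not in the AI component.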

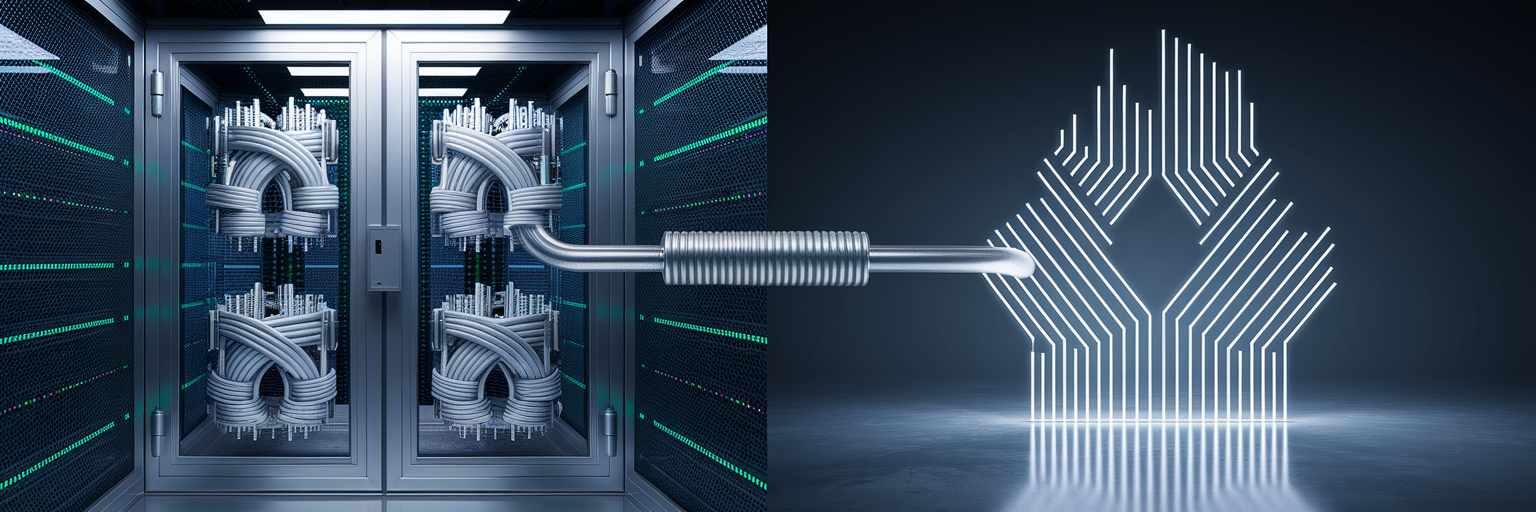

Guaranteeing Data Sovereignty with Self-Hosted Architecture

Beyond human oversight, the physical location of your data is paramount. The concept of data sovereignty dictates that your sensitive information must remain within your organization's control and jurisdiction. Sending raw configuration files to third-party cloud AI services is simply unacceptable for any security-conscious organization. This practice exposes credentials, internal IP addresses, and proprietary security policies to external environments, creating an attack surface outside your control. This is why private AI automation must be grounded in a self-hosted architecture.

Deploying your network configuration management platform on-premises or in a private cloud is the most direct way to meet this requirement. This architecture ensures that all configuration data, analytical processes, and AI queries remain entirely within your security perimeter. Nothing leaves your network. To be effective, a self-hosted solution must also include essential security features:

- Encryption at Rest: All stored configuration backups must be encrypted, protecting them from unauthorized access even if the storage medium is compromised.

- Role-Based Access Control (RBAC): Granular permissions are needed to restrict who can view, query, or manage specific configurations, ensuring users only access what they need.

- Transparent Security Policy: The platform must operate under a clear, auditable policy that governs all data handling and processing.

rConfig's architecture is built to support this on-premises AI model for networks. For example, our V8 Core edition is designed specifically for on-premises deployment, ensuring configuration data and AI queries are processed locally. This approach aligns with a strict data protection posture, as detailed in our security guidelines.
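To make the RBAC requirement concrete, here is a minimal sketch of a permission check that combines a role with a device-group scope. The role names, actions, and the `User` type are assumptions chosen for illustration; they do not describe rConfig's actual permission model.

```python
from dataclasses import dataclass

# Hypothetical role-to-action mapping; real platforms define their own roles.
ROLE_PERMISSIONS = {
    "viewer": frozenset({"view"}),
    "analyst": frozenset({"view", "query"}),
    "admin": frozenset({"view", "query", "manage"}),
}


@dataclass(frozen=True)
class User:
    name: str
    role: str
    device_groups: frozenset  # groups of devices this user may touch


def can(user: User, action: str, device_group: str) -> bool:
    """Allow an action only if the role grants it AND the device group is in scope."""
    allowed_actions = ROLE_PERMISSIONS.get(user.role, frozenset())  # unknown role: deny
    return action in allowed_actions and device_group in user.device_groups
```

For example, an `analyst` scoped to the `branch` group can query branch configurations, but cannot manage them and cannot query devices in any other group. Unknown roles are denied by default.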

Using Abstracted Metadata to Limit AI Data Exposure

While self-hosting keeps your data within your perimeter, an even more advanced security layer can be added to protect it from the AI model itself. This involves using an abstraction protocol that feeds the AI contextual metadata instead of raw configuration files. The protocol works by analyzing configurations locally and sending only abstracted, non-sensitive information to the AI engine. This could include device state, compliance status (e.g., 'compliant' or 'non-compliant'), or policy identifiers.

With this method, sensitive data like passwords, API keys, or SNMP community strings is never exposed to the model. The AI can still generate highly relevant outputs, such as a Python automation script, because it understands the network's structure and state. It just never "sees" the confidential details that make up that state. This is the core idea behind applying a Model Context Protocol (MCP) to networking, where MCP acts as a secure intermediary. MCP allows an AI to generate useful scripts that reference device IDs and compliance baselines, all while the underlying credentials and sensitive parameters remain completely shielded from the model.
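A minimal sketch of this abstraction step follows. It assumes Cisco-IOS-style configuration lines purely for illustration, and the function name and metadata fields are hypothetical. The key design choice is that the output is built only from derived facts (an allowlist), so no raw configuration line is ever copied into what the model receives.

```python
def abstract_config(device_id: str, raw_config: str) -> dict:
    """Derive non-sensitive metadata locally; raw lines never appear in the result."""
    lines = [ln.strip() for ln in raw_config.splitlines() if ln.strip()]
    return {
        "device_id": device_id,
        "line_count": len(lines),
        # Status labels only, never the configuration values themselves.
        "password_encryption": (
            "compliant" if "service password-encryption" in lines else "non-compliant"
        ),
        # Records THAT SNMP is configured, not the community string.
        "snmp_configured": any(ln.startswith("snmp-server community") for ln in lines),
    }
```

Given a configuration containing `snmp-server community s3cr3t RO`, the returned dictionary reports `"snmp_configured": True` but contains no trace of `s3cr3t`. An allowlist of derived fields is generally safer than trying to redact secrets from raw text, because a missed pattern in a redaction list leaks data while a missing field in an allowlist only reduces context.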

| Factor | Direct Data Access (Standard Approach) | Abstracted Metadata (MCP Approach) |
|---|---|---|
| Data Exposure | Full, raw configuration files sent to AI model | Only non-sensitive, abstracted metadata is sent |
| Security Risk | High risk of sensitive data (passwords, keys) exposure | Minimal risk; sensitive data is never seen by the AI |
| AI Context Quality | High, but with significant security trade-offs | High, provides necessary context without compromising data |
| Use Case | General-purpose queries, high-risk analysis | Secure script generation, compliance checks, diagnostics |

This table contrasts two methods for feeding data to an AI, highlighting how an abstracted metadata protocol like MCP provides sufficient context for intelligent automation while fundamentally minimizing security risks associated with direct data exposure. You can learn more about how our Vector product implements this technology.

Achieving Verifiable Compliance and Safe Change Management

These security principles directly enable a more practical and verifiable approach to compliance automation. An on-premises, read-only AI can efficiently cross-reference thousands of live configurations against industry benchmarks like NIST SP 800-53 or CIS, flagging deviations in seconds. This transforms a tedious manual task into a continuous, automated process. However, for this to be useful in a regulated environment, it must be fully traceable.
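The read-only cross-referencing described here can be sketched as a simple rule check. The rule IDs and patterns below are invented for illustration and are not actual NIST or CIS control identifiers; a real baseline would be far larger and vendor-specific.

```python
import re

# Hypothetical baseline: rule ID -> pattern that must match at least one config line.
BASELINE = {
    "PWD-ENCRYPT": re.compile(r"^service password-encryption$"),
    "SSH-V2": re.compile(r"^ip ssh version 2$"),
}


def check_compliance(configs: dict) -> dict:
    """Return, per device ID, the sorted list of failed rule IDs (empty if compliant).

    Read-only: the function inspects configuration text and never modifies it.
    """
    report = {}
    for device_id, text in configs.items():
        lines = [ln.strip() for ln in text.splitlines()]
        failed = [
            rule_id
            for rule_id, pattern in BASELINE.items()
            if not any(pattern.match(ln) for ln in lines)
        ]
        report[device_id] = sorted(failed)
    return report
```

The report contains only device IDs and rule IDs, which makes it suitable both as input to a suggestion engine and as an entry in an audit record.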

A secure GenAI NCM platform must create an immutable audit trail for every action. This log should capture the initial query, the AI's findings, the generated suggestion, and the final decision made by the human operator. This level of detail supports the documentation requirements of standards like ISO/IEC 27001 and provides clear evidence to auditors. Furthermore, even with human approval, changes can have unintended consequences. A robust rollback mechanism is essential. If an AI-suggested change causes an issue, the system must allow for instant restoration to the last known stable version.
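One common way to approximate an immutable audit trail in software is a hash chain, where each record stores the hash of the previous one so that any later edit is detectable. The sketch below is an assumption about how such a log could be built, not a description of rConfig's audit mechanism; the `AuditLog` class and its field names are hypothetical, and the result is tamper-evident rather than physically immutable.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


class AuditLog:
    """Append-only, hash-chained log of query, findings, suggestion, and decision."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(record: dict) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, query: str, findings: str, suggestion: str,
               decision: str, operator: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        record = {
            "query": query,
            "findings": findings,
            "suggestion": suggestion,
            "decision": decision,
            "operator": operator,
            "prev_hash": prev_hash,
        }
        record["hash"] = self._digest(record)
        self._entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; returns False if any record was altered or reordered."""
        prev_hash = GENESIS_HASH
        for record in self._entries:
            if record["prev_hash"] != prev_hash or record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True
```

If anyone later changes a stored decision from "approved" to "rejected", `verify()` returns `False` because the recomputed hash no longer matches. In practice the chain head would also need to be anchored somewhere the log's administrators cannot rewrite, such as write-once storage.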

rConfig's platform addresses these needs directly. Features like our bulk deployment module provide a complete audit trail for every change, documenting who did what and when. This is paired with a built-in engine for immediate recovery, ensuring that network stability is always maintained.

Mitigating Inherent LLM Risks and Looking Ahead

Even with these safeguards, it is important to acknowledge the inherent risks of Large Language Models (LLMs). One specific concern is their potential to "memorize" and inadvertently leak snippets of data they are trained on. A responsible AI implementation must actively mitigate this risk. Advanced platforms address this by strictly training the AI model on synthetic network data and abstract patterns, ensuring it is never exposed to live customer configurations during its foundational training phase.

Continuous internal audits and open-source tooling can further empower organizations to verify the AI pipeline themselves, building greater trust through transparency. This leads to a reproducible blueprint for adopting AI in network management safely:

- A strictly read-only AI with a human-in-the-loop design.

- An abstraction layer like MCP to provide context without data exposure.

- A self-hosted architecture to guarantee complete data sovereignty.

This framework allows the industry to adopt AI's powerful analytical benefits without making dangerous security compromises. It represents a responsible, practical path forward for the future of secure AI network management. For a broader overview of these principles in action, our website offers further resources.

About the Author

rConfig

All at rConfig

The rConfig Team is a collective of network engineers and automation experts. We build tools that manage millions of devices worldwide, focusing on speed, compliance, and reliability.
